Overview

Guidelines for adapting the Task Flow

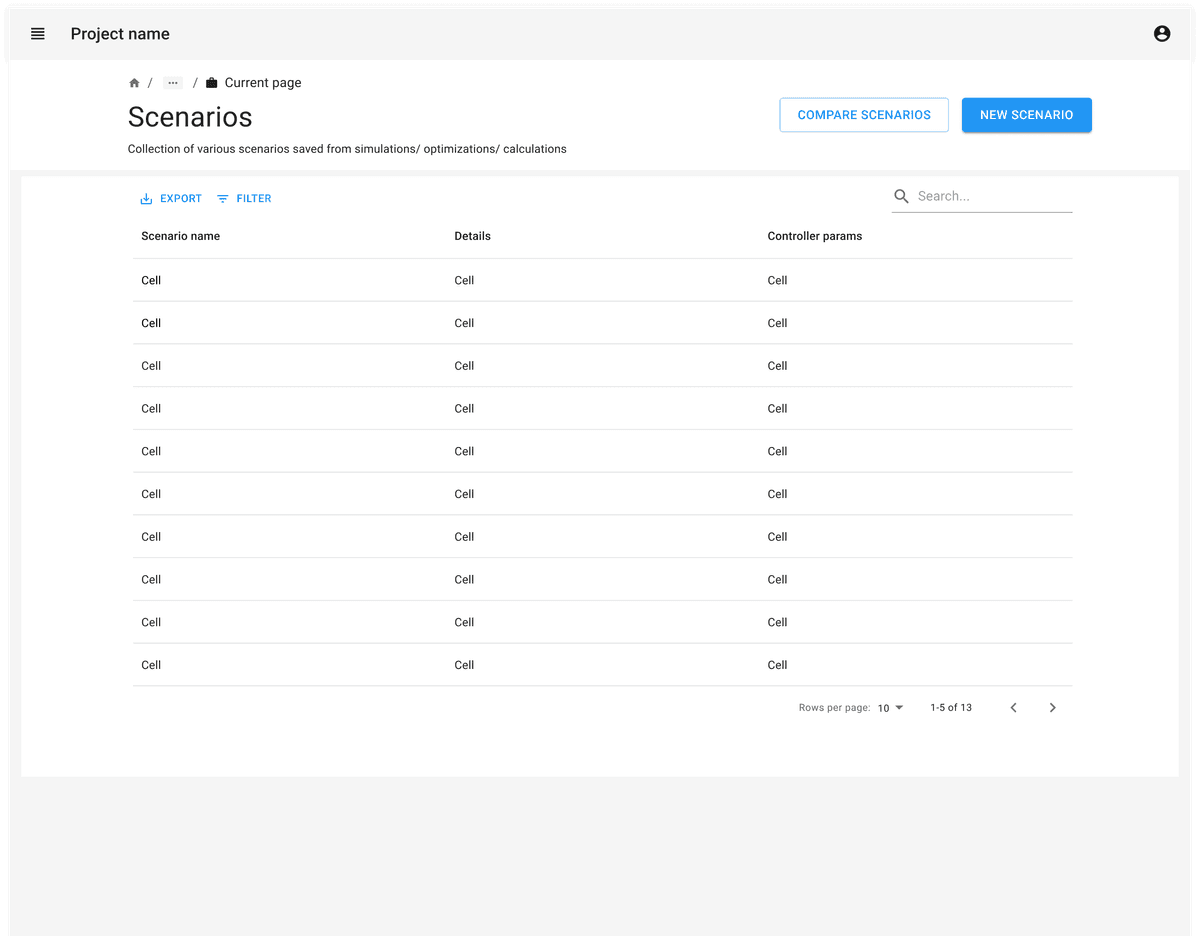

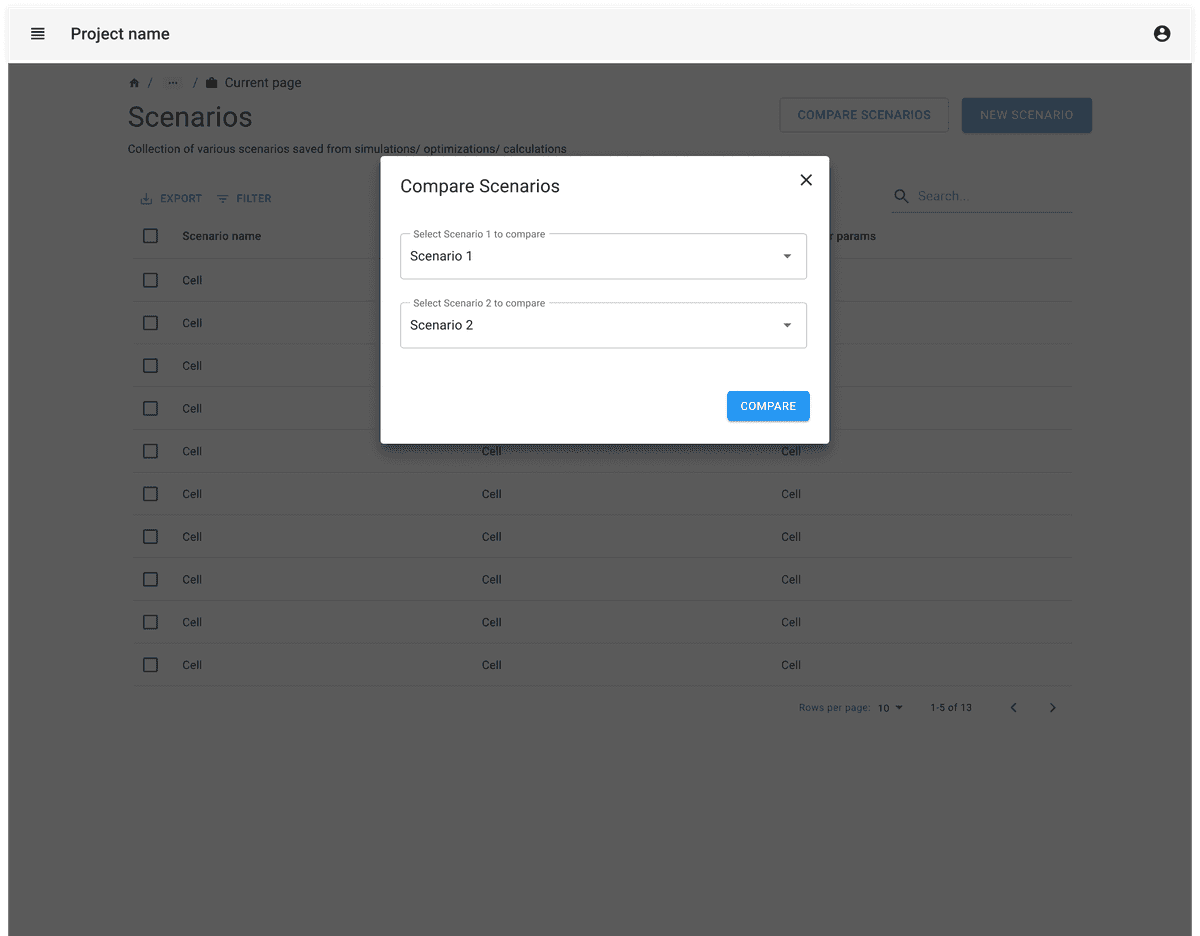

Consider whether users will want to compare two sets of attributes or more than two.

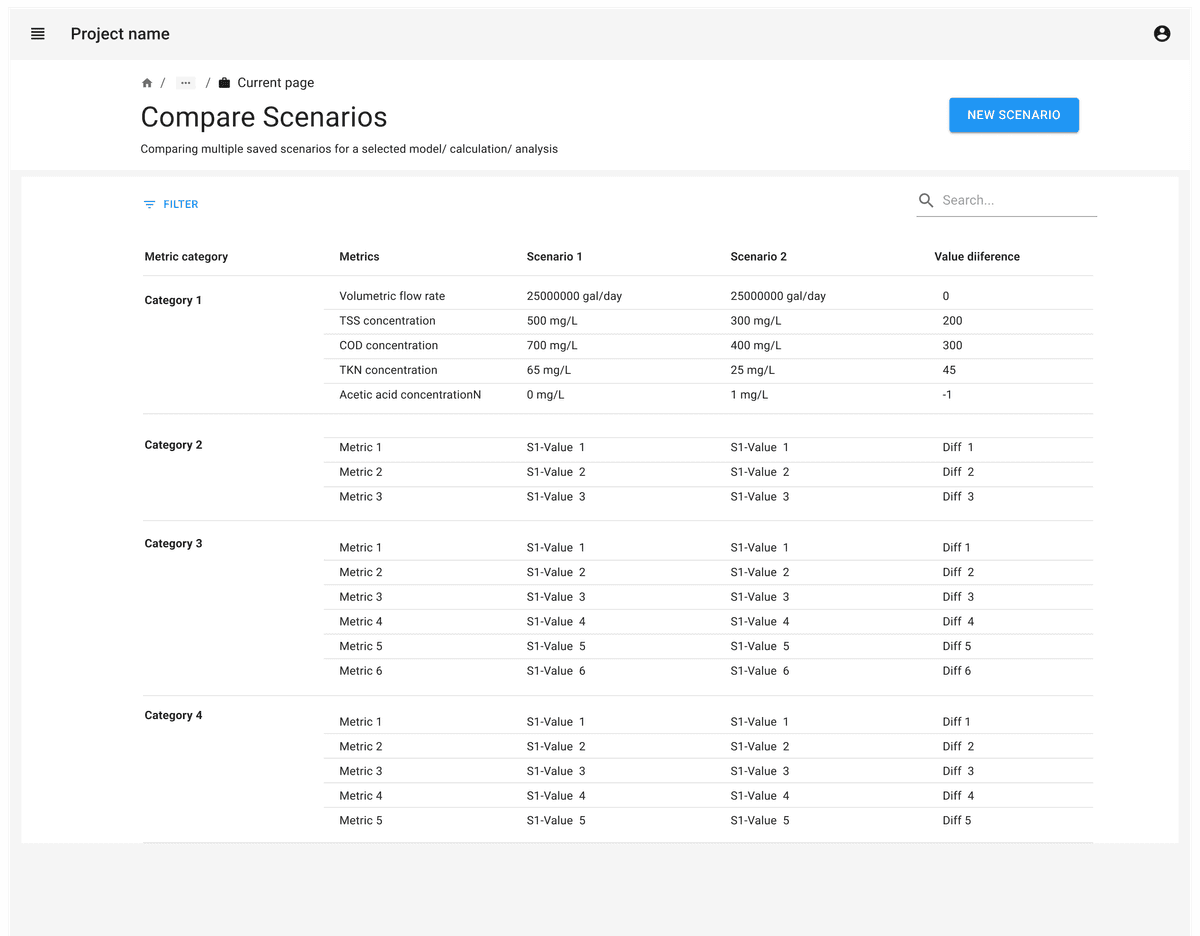

When comparing two sets of attributes, you can represent the differences between the sets either as a subtraction between values (a delta) or by showing the values side by side. The deltas can also be plotted.
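As a minimal sketch of the two-set case, the following computes one row per shared attribute holding both side-by-side values and their delta. The function name `attribute_deltas` and the sample data are hypothetical and used only for illustration; the sketch also assumes all compared values are numeric.

```python
from typing import Dict, List, Tuple


def attribute_deltas(
    baseline: Dict[str, float], candidate: Dict[str, float]
) -> List[Tuple[str, float, float, float]]:
    """Return (attribute, baseline value, candidate value, delta) rows.

    Only attributes present in both sets are compared; the delta is
    candidate minus baseline.
    """
    rows = []
    for name, base_value in baseline.items():
        if name in candidate:
            delta = candidate[name] - base_value
            rows.append((name, base_value, candidate[name], delta))
    return rows


# Hypothetical sample data: two sets with the same attributes.
set_a = {"price": 40, "storage_gb": 256, "weight_g": 180}
set_b = {"price": 55, "storage_gb": 512, "weight_g": 175}

rows = attribute_deltas(set_a, set_b)
for name, a, b, delta in rows:
    # Side-by-side values followed by the signed difference.
    print(f"{name:12} {a:>6} {b:>6} {delta:+}")
```

The same rows can feed either a side-by-side table or a delta plot, so the choice of presentation can be left to the view.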

When comparing more than two sets of attributes, consider displaying the values of each set as a column in a table, and consider visualizations to aid the comparison.
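One possible way to arrange more than two sets is sketched below: attributes become rows and each set becomes a column. The helper name `comparison_table`, the `missing` placeholder, and the sample data are assumptions made for this illustration.

```python
from typing import Dict, List


def comparison_table(
    sets: Dict[str, Dict[str, object]], missing: object = ""
) -> List[List[object]]:
    """Build a table with one row per attribute and one column per set.

    Attributes keep the order in which they are first seen; a set that
    lacks an attribute gets the `missing` placeholder in that cell.
    """
    attributes: List[str] = []
    for values in sets.values():
        for name in values:
            if name not in attributes:
                attributes.append(name)

    table: List[List[object]] = [["attribute", *sets.keys()]]
    for name in attributes:
        table.append([name, *(values.get(name, missing) for values in sets.values())])
    return table


# Hypothetical sample data: three sets, one of them missing an attribute.
table = comparison_table(
    {
        "A": {"price": 40, "storage_gb": 256},
        "B": {"price": 55, "storage_gb": 512},
        "C": {"price": 48},
    }
)
```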

Data visualizations like graphs and charts can help to highlight the differences and similarities between sets being compared.

Prioritize the attributes that are critical for comparison instead of exposing every attribute in a single view.

If there are too many attributes to compare, let users prioritize comparisons by providing ways to search and filter the attributes. This makes it easier for them to focus on what interests them instead of being overwhelmed by a large comparison matrix.
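A search-and-filter step over attribute names could look like the sketch below, which also moves attributes marked as critical to the top. The function name `filter_attributes`, its parameters, and the sample attribute names are hypothetical; a real implementation might also match on labels or categories.

```python
from typing import List, Sequence


def filter_attributes(
    attributes: Sequence[str], query: str = "", critical: Sequence[str] = ()
) -> List[str]:
    """Return attributes whose name contains `query` (case-insensitive).

    Attributes listed in `critical` are moved to the front; everything
    else keeps its original relative order.
    """
    needle = query.strip().lower()
    matches = [name for name in attributes if needle in name.lower()]
    # sorted() is stable, so sorting on a boolean key only moves the
    # critical attributes (key False) ahead of the rest (key True).
    return sorted(matches, key=lambda name: name not in critical)


# Hypothetical attribute names.
attributes = ["price", "storage_gb", "weight_g", "battery_hours", "storage_type"]

storage_only = filter_attributes(attributes, "storage")
prioritized = filter_attributes(attributes, critical=["battery_hours"])
```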

Consider offering an expanded view of a selected set or attribute so users can see additional related details, data tables, or diagrams. The expanded view could be displayed in a pop-up or in a vertical or horizontal slide-out panel.

Consider providing the ability to save or export comparison results.
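As one illustration of an export option, the sketch below serializes a comparison table to CSV text using Python's standard `csv` module. The function name `export_comparison_csv` and the sample rows are assumptions; other formats (for example PDF or a saved comparison within the product) may suit users better.

```python
import csv
import io
from typing import Iterable, Sequence


def export_comparison_csv(rows: Iterable[Sequence[object]]) -> str:
    """Serialize comparison rows (header first) to a CSV string."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerows(rows)
    return buffer.getvalue()


# Hypothetical comparison result: header row followed by attribute rows.
csv_text = export_comparison_csv(
    [
        ["attribute", "A", "B"],
        ["price", 40, 55],
        ["storage_gb", 256, 512],
    ]
)
```

The returned string can be written to a file or offered as a download, depending on how the product handles saved results.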